How to integrate AI models into the core of an application that learns automatically from user behavior

Beyond the API: Building a Smart, Independent Core

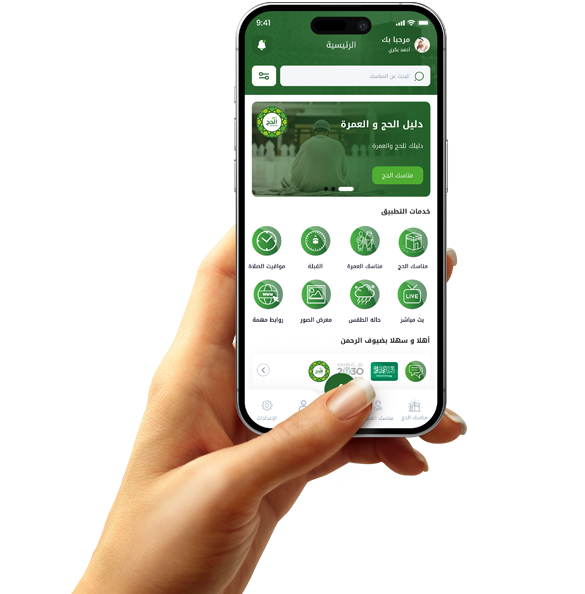

At Grand, we differentiate between an app that merely "talks" to ChatGPT through an API and an app with a custom model built into its core. Integrating AI begins with choosing the right architecture: on-device AI for speed and privacy, or a core connected to powerful cloud processing engines. The idea is that the code doesn't wait for a user command to act; it monitors "silent data" (usage hours, browsing speed, interests) and gradually builds a unique digital profile for each user. This allows the app to predict the user's likely next step, often before they ask for it.
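As a rough illustration of building a profile from "silent data", here is a minimal Python sketch. The `UserProfile` class, its field names, and the "most frequent category" prediction rule are all assumptions made for this example, not a description of any particular production system; a real app would likely use a richer model.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class UserProfile:
    """Running summary of passive signals for one user (hypothetical schema)."""
    hour_counts: Counter = field(default_factory=Counter)      # sessions per hour of day
    interest_counts: Counter = field(default_factory=Counter)  # views per content category
    total_scroll_speed: float = 0.0                            # sum of observed browsing speeds
    events: int = 0                                            # number of events observed

    def observe(self, hour: int, category: str, scroll_speed: float) -> None:
        """Fold one passive event into the profile; no user command is needed."""
        self.hour_counts[hour] += 1
        self.interest_counts[category] += 1
        self.total_scroll_speed += scroll_speed
        self.events += 1

    def average_scroll_speed(self) -> float:
        """Mean browsing speed across all observed events (0.0 if none)."""
        return self.total_scroll_speed / self.events if self.events else 0.0

    def predict_next_category(self) -> Optional[str]:
        """Naive prediction (an assumption): the category seen most often so far."""
        if not self.interest_counts:
            return None
        return self.interest_counts.most_common(1)[0][0]
```

Calling `observe` on every screen view and then `predict_next_category` when the home screen loads is one simple way to act on the profile without waiting for the user to search.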

Recommendation Engines as a Growth Engine

The primary job of AI at the core of your application is "hyper-personalization." With a well-built collaborative filtering algorithm, the application can suggest products or content based on the similarity of tastes among thousands of users. This not only increases the time customers spend in the application, it also converts "data" into "money." The power here is that the application sells itself, because it shows customers "what they actually want" at the right time and place. This is a large part of the success of multi-million dollar platforms that rely on a continuous "engagement algorithm."
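To make the idea of taste similarity concrete, the following is a small sketch of user-based collaborative filtering in plain Python. It assumes ratings are stored as a dictionary of `user -> {item: rating}` and uses cosine similarity as the similarity measure; the function names and data layout are illustrative choices, and large platforms typically use more scalable techniques.

```python
import math
from typing import Dict, List

Ratings = Dict[str, Dict[str, float]]  # user -> {item: rating} (assumed layout)


def cosine_similarity(a: Dict[str, float], b: Dict[str, float]) -> float:
    """Cosine similarity between two users' rating vectors."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[item] * b[item] for item in shared)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def recommend(target: str, ratings: Ratings, top_n: int = 3) -> List[str]:
    """Suggest items the target has not rated, weighted by similar users' ratings."""
    seen = ratings[target]
    scores: Dict[str, float] = {}
    for other, other_ratings in ratings.items():
        if other == target:
            continue
        sim = cosine_similarity(seen, other_ratings)
        if sim <= 0:
            continue  # ignore users with no overlap in taste
        for item, rating in other_ratings.items():
            if item not in seen:
                scores[item] = scores.get(item, 0.0) + sim * rating
    # Highest score first
    return sorted(scores, key=lambda item: scores[item], reverse=True)[:top_n]
```

For example, if two customers both rated the same shoes and bag highly and one of them also bought a watch, `recommend` would surface the watch for the other, while ignoring customers whose purchases do not overlap at all.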

Continuous Learning Loops

Intelligent programming in 2026 relies on continuous learning loops. The application doesn't just collect data; it "modifies itself" based on the results. If it suggests something and the user ignores it, the algorithm treats that as a sign it took a "wrong path" and programmatically adjusts the model's weights. This process runs in background tasks, so it doesn't slow the phone down. Over time, you have a "genius" app whose expertise grows with each day of use, becoming a valuable technological asset whose worth increases with the amount of data it has learned from.
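One simple way to picture such a loop is an incremental weight update: each time a suggestion is accepted or ignored, the weight for that category is nudged toward 1 or 0. The sketch below assumes this exponential-moving-average rule, a hypothetical `FeedbackLoop` class, and an arbitrary learning rate; it stands in for whatever training procedure a real model would use.

```python
from typing import Dict, Optional


class FeedbackLoop:
    """Keeps one weight per category and adjusts it after every suggestion."""

    def __init__(self, learning_rate: float = 0.2, initial_weight: float = 0.5):
        self.learning_rate = learning_rate    # how strongly one event moves a weight
        self.initial_weight = initial_weight  # starting weight for unseen categories
        self.weights: Dict[str, float] = {}

    def record(self, category: str, accepted: bool) -> float:
        """Move the weight toward 1.0 if accepted, toward 0.0 if ignored."""
        current = self.weights.get(category, self.initial_weight)
        target = 1.0 if accepted else 0.0
        updated = current + self.learning_rate * (target - current)
        self.weights[category] = updated
        return updated

    def best_category(self) -> Optional[str]:
        """Category with the highest learned weight, or None if nothing is recorded."""
        if not self.weights:
            return None
        return max(self.weights, key=lambda c: self.weights[c])
```

With the defaults, an ignored suggestion lowers a fresh category's weight from 0.5 to 0.4, and an accepted one raises it to 0.6, so repeated "wrong paths" gradually push that category out of the top suggestions.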

Privacy and Performance: Balancing "Code Intelligence" with Device Resources

The biggest challenge we face at Grand is making the app intelligent without draining the battery or violating privacy. The modern trend is Edge AI, where intelligent processing takes place on the user's device itself rather than on remote servers. This removes the network round trip, so responses are fast, and it assures users that their data doesn't leave their phone. When you build an app that respects customer privacy while providing "intelligence" that simplifies their lives, you're building a "trusted" and "advanced" tech brand, which is the pinnacle of programming professionalism.
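To illustrate the battery side of this balance, here is a minimal sketch of a gate that decides whether an on-device model update should run right now. The `DeviceState` fields, the function name, and the 30% threshold are assumptions chosen for the example; on a real phone these values would come from the platform's battery and scheduling APIs.

```python
from dataclasses import dataclass


@dataclass
class DeviceState:
    """Snapshot of device conditions (hypothetical; supplied by the host platform)."""
    battery_percent: int
    is_charging: bool
    is_idle: bool


def should_run_local_update(state: DeviceState, min_battery: int = 30) -> bool:
    """Allow on-device learning only when it is unlikely to bother the user."""
    if state.is_charging:
        return True  # plugged in: battery cost is not a concern
    # On battery: require an idle device and enough charge left
    return state.is_idle and state.battery_percent >= min_battery
```

Because the decision and the learning both happen locally, nothing in this flow requires sending the user's data to a server.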